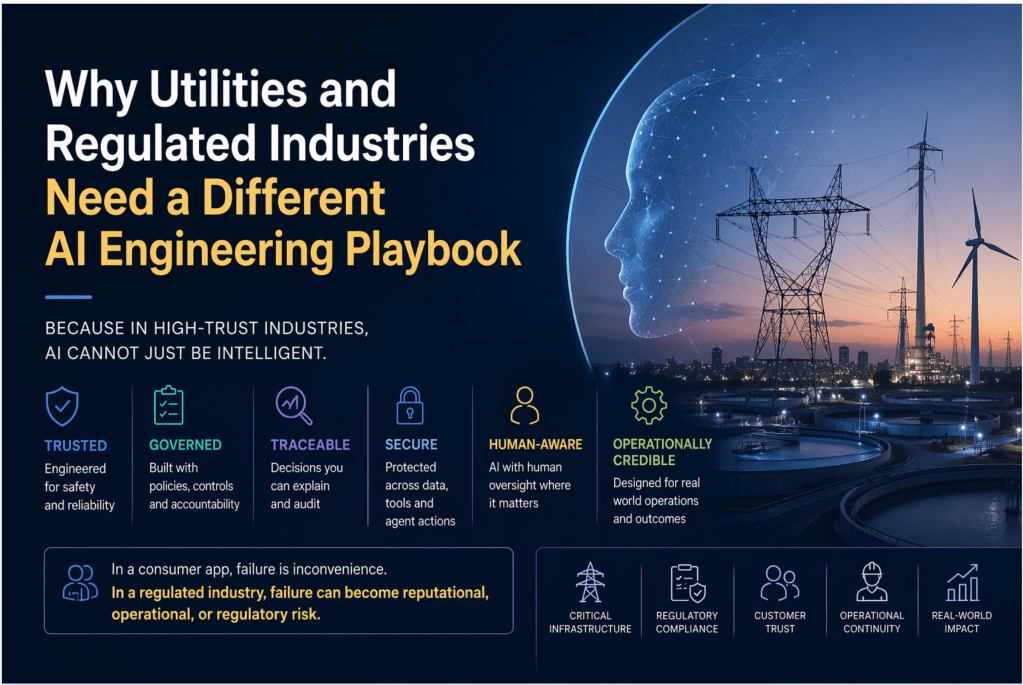

Because in high-trust industries, AI cannot just be intelligent. It has to be governed, traceable, secure, and operationally credible.

Most AI engineering conversations today assume that every industry can adopt AI in roughly the same way.

Build fast.

Experiment widely.

Ship early.

Improve as you go.

That logic works well in low-risk digital environments.

But utilities and regulated industries do not operate in that world.

They operate in a world of critical infrastructure, public trust, compliance obligations, operational resilience, customer vulnerability, and real-world consequences. Here, AI cannot simply be impressive. It has to be dependable.

That is why utilities and regulated industries need a different AI engineering playbook.

In a consumer app, failure is an inconvenience.

In a regulated industry, failure can become reputational, operational, or regulatory risk.

And that single difference changes everything.

The problem with applying generic AI thinking to regulated industries

Much of today’s AI engineering language is still shaped by the consumer internet.

It is built around speed, scale, autonomy, iteration, and user growth. It assumes failure can be tolerated, corrected, and absorbed over time.

But utilities do not have that luxury.

A power utility cannot afford an AI assistant giving inconsistent advice during an outage.

A water company cannot allow an agent to invent compliance guidance.

A gas or energy retailer cannot let a customer-facing AI system mishandle a vulnerable customer case.

A regulated operator cannot deploy autonomous intelligence into critical workflows without clear control, traceability, and accountability.

This is where the AI conversation has to mature. The real question is no longer “Can AI do this?”

The real question is “Can AI do this safely, consistently, transparently, and in a way the business is willing to stand behind?”

That is not just a model question. That is an engineering question.

Utilities are not just another AI vertical

Utilities and regulated sectors are fundamentally different operating environments.

They are asset-heavy.

They are process-intensive.

They are highly regulated.

They depend on long-lived legacy systems.

They manage safety-sensitive operations.

They serve millions of customers who rely on continuity, fairness, and resilience.

That means AI in these industries is not being introduced merely to write better emails or create smarter chat experiences.

It is being introduced into environments that touch:

- outage response

- field operations

- billing and tariff logic

- customer vulnerability

- asset performance

- engineering design

- market processes

- compliance reporting

- work management

- service continuity

This is not just productivity AI. This is increasingly operational AI.

And the moment AI starts influencing operations, the bar rises dramatically.

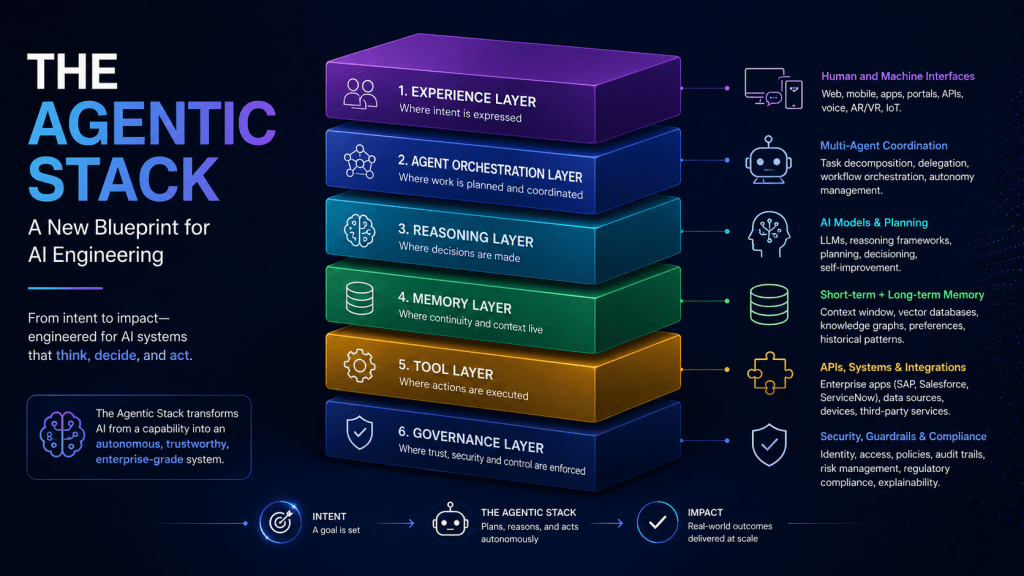

In regulated industries, the model is not the product

One of the biggest misconceptions in the market is that success in AI comes down to choosing the right model.

Of course, models matter.

But in utilities and other regulated industries, the model is only one component. Often, it is not even the hardest one.

The real challenge is building a complete system around the model:

- how it is grounded in enterprise data

- what tools it can access

- which actions require approval

- how its outputs are validated

- what happens when confidence is low

- how it is monitored

- how it fails safely

- how it integrates with core enterprise platforms

That is where AI engineering becomes real. In these industries, the model is not the product. The real product is the system of trust built around the model. That system includes guardrails, context, workflows, observability, governance, security, and human accountability.
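To make two of the questions above concrete (how outputs are validated, and what happens when confidence is low), here is a minimal sketch in Python. It is an illustration under stated assumptions, not a reference design: `MIN_CONFIDENCE`, `SAFE_FALLBACK`, `ModelOutput`, and the example validator are hypothetical names, and the confidence score is assumed to be supplied by the surrounding system.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical threshold; a real value would come from evaluation, not a guess.
MIN_CONFIDENCE = 0.8

# Hypothetical safe response used whenever a check fails.
SAFE_FALLBACK = "I can't answer that reliably. Routing you to a specialist."


@dataclass
class ModelOutput:
    text: str
    confidence: float  # assumed to be provided by the surrounding system, in [0, 1]


def guarded_answer(output: ModelOutput, validators: list[Callable[[str], bool]]) -> str:
    """Return the model's text only if it clears every check; otherwise fail safely."""
    if output.confidence < MIN_CONFIDENCE:
        return SAFE_FALLBACK
    if not all(check(output.text) for check in validators):
        return SAFE_FALLBACK
    return output.text


def no_guarantee_language(text: str) -> bool:
    """Example validator: reject answers that promise outcomes."""
    return "guarantee" not in text.lower()
```

The point of the sketch is the shape: the model's answer is one input to a decision, and the default when any check fails is the safe path.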

Without that, what you have is not enterprise AI.

You have a demo.

The first principle: engineer for trust, not freedom

Much of the AI world celebrates freedom.

Let the agent reason.

Let it decide.

Let it act.

Let it self-correct.

That may sound exciting. But in regulated industries, freedom without control is not innovation. It is risk.

The first design principle for utilities should be simple:

Do not engineer AI for freedom first. Engineer it for trust first.

That changes the entire playbook.

The goal is not to create the most autonomous system possible.

The goal is to create the most dependable system possible.

Sometimes that will mean high automation.

Sometimes that will mean constrained agents.

Sometimes that will mean intelligent co-pilots with clear approval gates.

That is not a limitation.

That is mature engineering.

Grounding matters more than fluency

One of the most deceptive qualities of modern AI is that fluency feels like accuracy.

A polished answer can create the illusion of truth.

In regulated industries, that illusion is dangerous.

Utilities do not need systems that merely sound confident. They need systems grounded in:

- approved policy

- trusted enterprise data

- operational procedures

- engineering rules

- system-of-record context

- current regulatory guidance

A fluent hallucination is still a hallucination.

If an AI assistant explains tariff eligibility incorrectly, that matters.

If an agent references an outdated field procedure, that matters.

If a compliance response is well written but wrong, that matters even more.

In regulated environments, evidence beats eloquence.

The strongest AI systems will not be the ones that speak most beautifully. They will be the ones most deeply grounded in the truth of the enterprise.
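One way to picture "evidence beats eloquence" is a gate that refuses to release a draft answer unless approved, current sources stand behind it. The Python sketch below is only that, a sketch: `Source`, `usable_sources`, and `grounded_reply` are hypothetical names, and it checks only that eligible sources exist. Verifying that the draft is actually supported by those sources is a separate and harder step that is not shown here.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Source:
    doc_id: str
    text: str
    approved: bool
    superseded_on: Optional[date] = None  # None means the document is still current


def usable_sources(sources: list[Source], today: date) -> list[Source]:
    """Keep only approved documents that have not been superseded."""
    return [
        s for s in sources
        if s.approved and (s.superseded_on is None or s.superseded_on > today)
    ]


def grounded_reply(draft: str, sources: list[Source], today: date) -> dict:
    """Attach evidence to a draft answer, or decline when there is none."""
    evidence = usable_sources(sources, today)
    if not evidence:
        return {"answer": None, "citations": [], "reason": "no approved, current source"}
    return {"answer": draft, "citations": [s.doc_id for s in evidence], "reason": None}
```

An outdated field procedure or an unapproved draft policy is filtered out before it can shape what the user sees.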

Human-in-the-loop is not a weakness

There is a tendency in the market to treat human oversight as though it slows AI down.

I believe that is the wrong way to look at it.

In utilities and regulated industries, human-in-the-loop is often what makes AI deployable.

A field recommendation may need engineer validation.

A compliance response may need policy sign-off.

A vulnerable customer interaction may need escalation.

An operational action may require formal approval before execution.

This does not mean AI has failed.

It means AI has been designed responsibly.
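The examples above can be sketched as an approval gate: the AI proposes, and nothing executes until the right role has signed off. This is a minimal Python illustration, not a prescribed design. The action kinds, the role names in `REQUIRED_APPROVER`, and the `ProposedAction` class are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from output type to the role that must sign off.
REQUIRED_APPROVER = {
    "field_recommendation": "engineer",
    "compliance_response": "policy_owner",
    "operational_action": "control_room_supervisor",
}


class ApprovalRequired(Exception):
    """Raised when an action is executed without the required sign-off."""


@dataclass
class ProposedAction:
    kind: str
    description: str
    approvals: set[str] = field(default_factory=set)

    def approve(self, role: str) -> None:
        self.approvals.add(role)

    def execute(self) -> str:
        needed = REQUIRED_APPROVER.get(self.kind)
        if needed is None:
            # Unknown kinds are refused by default rather than waved through.
            raise ApprovalRequired(f"unknown action kind {self.kind!r}; refusing by default")
        if needed not in self.approvals:
            raise ApprovalRequired(f"{self.kind} needs sign-off from {needed}")
        return f"executed: {self.description}"
```

Note the default: an action type nobody has classified is blocked, not allowed.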

The future in high-trust industries will not always be fully autonomous agents roaming freely across enterprise systems.

Much of the real value will come from well-governed co-pilots and constrained agents operating within clearly defined boundaries.

That is not less ambitious.

That is far more credible.

If you cannot trace it, you cannot trust it

Observability is becoming one of the defining disciplines of AI engineering.

In traditional software, observability tells you if a system is slow, broken, or overloaded.

In AI systems, observability must go much further.

You need to know:

- what instruction was used

- what context was retrieved

- what sources shaped the answer

- what tools were invoked

- what path the system followed

- where uncertainty emerged

- what the user actually saw

- whether the outcome met the expected standard

In utilities, this is not optional.

If an AI system recommends the wrong next step in an outage scenario, the business needs to reconstruct why.

If a regulator questions a conclusion, there must be an auditable trail.

If customers receive inconsistent answers, teams need a way to inspect, explain, and improve the system.
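The checklist above maps naturally onto a structured trace record written for every interaction. Here is a minimal Python sketch using only the standard library. The field names and the `TraceRecord` class are my own assumptions for illustration, not a standard schema.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass
class TraceRecord:
    request_id: str
    instruction: str               # what instruction was used
    retrieved_context: list[str]   # what context was retrieved
    sources: list[str]             # what sources shaped the answer
    tool_calls: list[str]          # what tools were invoked, in order
    steps: list[str]               # what path the system followed
    uncertainty_notes: list[str]   # where uncertainty emerged
    shown_to_user: str             # what the user actually saw
    met_standard: Optional[bool] = None  # filled in by a later review
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialise the record so it can be appended to an audit store."""
        return json.dumps(asdict(self), sort_keys=True)
```

With a record like this stored per request, reconstructing why a recommendation was made becomes a lookup rather than an investigation.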

Black-box AI does not belong in high-trust industries.

If you cannot trace it, you cannot trust it.

And if you cannot trust it, you cannot scale it.

Security and governance must be built in from day one

Too many organisations still treat AI governance as something to add later.

That approach will not survive in regulated sectors.

AI introduces a different attack surface and a different control challenge.

Now you are dealing with:

- prompts

- memory

- tool permissions

- data connectors

- agent identities

- hidden instructions

- retrieval pathways

- sensitive context

- action boundaries

That means AI engineering in utilities must include:

- role-based access

- environment separation

- policy enforcement

- auditability

- data minimisation

- secure tool orchestration

- governance over prompts, agents, and knowledge sources

- monitoring for misuse or abnormal behaviour

This is why AI engineering is no longer just an innovation topic.

It is becoming part of the enterprise control architecture.

That is a major shift, and many organisations have not fully realised it yet.
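Several of the controls listed above (role-based access, auditability, data minimisation, secure tool orchestration) can be sketched in a few lines of Python. This is an illustration under assumptions, not a production pattern: the role names, tool names, `TOOL_PERMISSIONS`, and `call_tool` are hypothetical, and a real system would back this with an identity provider and a durable audit store.

```python
from typing import Callable

# Hypothetical role-to-tool allowlist; anything not listed is denied.
TOOL_PERMISSIONS = {
    "billing_assistant": {"read_tariff"},
    "outage_copilot": {"read_outage_status", "read_work_order"},
}

# In-memory audit log for illustration only.
audit_log: list[dict] = []


def call_tool(agent_role: str, tool_name: str,
              tools: dict[str, Callable[..., object]], **kwargs):
    """Run a tool only if the agent's role allows it, and log every attempt."""
    allowed = tool_name in TOOL_PERMISSIONS.get(agent_role, set())
    audit_log.append({
        "role": agent_role,
        "tool": tool_name,
        "allowed": allowed,
        # Data minimisation: record argument names, not their values.
        "arg_names": sorted(kwargs),
    })
    if not allowed:
        raise PermissionError(f"{agent_role} may not call {tool_name}")
    return tools[tool_name](**kwargs)
```

Denied attempts are logged as well as successful ones, because the attempts that were blocked are often the ones worth investigating.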

Utilities need AI that understands the real world

Utilities sit at the intersection of digital systems and physical reality.

Assets age.

Pipes leak.

Transformers fail.

Storms disrupt service.

Field crews move.

Demand shifts.

Customers depend on continuity.

Regulatory obligations shape what is possible.

This is not a purely digital environment.

That is why the future of AI in utilities cannot stop at document chat or internal Q&A.

It has to connect language intelligence with operational context.

That includes systems that can work across:

- asset records

- outage workflows

- GIS and network context

- work orders

- maintenance history

- customer operations

- field service platforms

- engineering standards

- compliance frameworks

- enterprise applications

The real frontier is not simply a chatbot answering questions.

The real frontier is AI becoming part of the decision fabric of the utility.

That is where engineering discipline matters most.

The winners will not be the ones with the most pilots

Right now, many enterprises are still measuring progress in pilots.

How many co-pilots launched.

How many use cases identified.

How many proofs of concept running.

How many teams experimenting.

That phase is useful. But it is not enough.

Because pilots do not create transformation. Engineering does.

The winners in utilities and regulated industries will not be the firms with the noisiest AI story. They will be the firms that learn how to build AI that survives contact with enterprise reality.

They will:

- choose the right use cases

- ground AI in trusted data

- integrate with real workflows

- put controls around autonomy

- design safe escalation paths

- measure outcomes rigorously

- keep humans accountable

- earn trust one deployment at a time

In other words, they will stop treating AI as a demo layer and start engineering it as enterprise infrastructure.

That is the real shift now underway.

Final thought

We are moving beyond the phase where AI success is judged by how clever the model appears.

The next phase will be defined by a harder question:

Can this system be trusted in the real world?

That question matters in every industry.

But it matters most in utilities and regulated sectors.

Because these industries cannot afford AI that is merely impressive.

They need AI that is grounded, governed, observable, secure, resilient, and operationally credible.

That is why utilities and regulated industries need a different AI engineering playbook.

In a consumer app, failure is an inconvenience.

In a regulated industry, failure can become reputational, operational, or regulatory risk.

And that is why the winners will not be the organisations with the loudest AI story.

They will be the ones that learn how to engineer AI that regulators can trust, operators can use, and customers can rely on.
